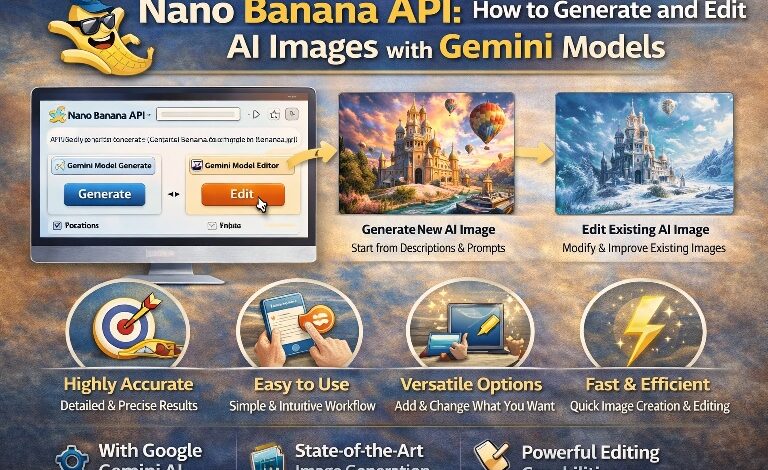

Nano Banana API: How to Generate and Edit AI Images with Gemini Models

Artificial intelligence has completely transformed the way digital images are created and edited. Only a few years ago, producing high-quality visuals required professional software, design experience, and hours of manual work. Today, powerful AI tools make it possible to generate detailed illustrations, edit photos, and refine creative concepts within seconds. Developers and creators are increasingly exploring APIs that connect advanced image generation models directly to applications, allowing automation and scalable creativity. One of the most interesting innovations in this space is the Nano Banana API, which focuses on simplifying how AI image generation and editing workflows are integrated into modern platforms.

The demand for AI-driven visuals is growing rapidly across industries such as marketing, education, entertainment, product design, and social media. Businesses need fast content creation, while developers want flexible tools that can adapt to different use cases. APIs designed for AI image generation help bridge this gap by providing structured endpoints for generating visuals from text prompts, modifying existing images, and applying creative styles. Instead of building complex machine-learning infrastructure from scratch, developers can plug into these APIs and focus on building user experiences. This shift has opened the door to faster innovation and new possibilities for creative automation.

AICC highlights the Nano Banana API as an efficient way to generate and edit AI images with Gemini-based models, giving developers a streamlined path to integrating visual AI capabilities directly into their applications. Through structured endpoints and prompt-driven workflows, developers can turn text descriptions into detailed visuals or modify existing images with minimal effort. Because the integration is simple, creators can focus on design logic and user experience rather than on running heavy model infrastructure. As a result, the API acts as a bridge between raw AI capability and real-world applications that rely on dynamic visual content.

Understanding AI Image Generation APIs

AI image generation APIs function as gateways between applications and complex machine-learning models that specialize in visual synthesis. Instead of downloading massive datasets or training neural networks independently, developers send requests to the API using text prompts or image inputs. The AI model processes these instructions and returns a generated image, edited version, or visual transformation.

This approach dramatically simplifies development workflows. Rather than investing months in building machine learning pipelines, teams can experiment with visual generation features in a matter of hours. It also reduces infrastructure costs because the heavy computation runs on the provider's side, behind the API.

A typical AI image generation workflow involves several steps:

- Writing a descriptive text prompt

- Sending the prompt to the API endpoint

- Allowing the model to interpret visual elements

- Receiving the generated image response

- Refining or editing results with additional prompts

These APIs are designed to be developer-friendly, often supporting common programming languages and structured JSON requests. The result is a flexible system that allows developers to build creative tools, automated design platforms, and interactive visual experiences.
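The request and response steps of this workflow can be sketched as follows. This is a minimal illustration, not the actual Nano Banana API schema: the field names `prompt`, `width`, `height`, and `image_base64` are assumptions chosen for the example, and the response is simulated so that no network call is made.

```python
import base64
import json


def build_payload(prompt: str, width: int = 1024, height: int = 1024) -> str:
    """Serialize a text-to-image request as JSON (hypothetical field names)."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    return json.dumps({"prompt": prompt, "width": width, "height": height})


def extract_image_bytes(response_body: str) -> bytes:
    """Decode the image from a response assumed to look like {"image_base64": "..."}."""
    data = json.loads(response_body)
    return base64.b64decode(data["image_base64"])


# Simulated round trip: the "response" is built locally instead of fetched.
payload = build_payload("a lighthouse at dusk, watercolor style")
fake_response = json.dumps(
    {"image_base64": base64.b64encode(b"\x89PNG...").decode("ascii")}
)
image_bytes = extract_image_bytes(fake_response)
```

Many image APIs return binary data as base64 inside JSON, which is why the decode step is shown; a real provider may instead return a URL or a different field layout, so check its reference before relying on this shape.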

Another key advantage is scalability. Whether generating one image or thousands of variations, the API structure supports automated workflows that scale with demand. This capability is particularly useful for companies creating marketing visuals, product mockups, educational graphics, or social media assets.
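One way to picture this kind of scaling is a small batch helper that fans prompts out over a thread pool. The sketch below is an assumption about how a client might be organized, not part of any documented SDK: `generate_batch` is a hypothetical helper, and `fake_generate` stands in for a real API call.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def generate_batch(
    prompts: List[str],
    generate: Callable[[str], bytes],
    max_workers: int = 4,
) -> Dict[str, bytes]:
    """Run `generate` for each prompt in parallel and map prompt -> image bytes."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so results line up with prompts.
        results = list(pool.map(generate, prompts))
    return dict(zip(prompts, results))


def fake_generate(prompt: str) -> bytes:
    # Stand-in for a real API call; returns placeholder bytes.
    return f"image for: {prompt}".encode("utf-8")


batch = generate_batch(
    ["red sneaker on white", "blue sneaker on white"], fake_generate
)
```

Passing the generator in as a function keeps the batching logic independent of any particular provider, and `max_workers` gives a simple way to stay under whatever rate limit applies.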

Why Gemini Models Are Powerful for Visual Creation

Gemini-based AI models represent a new generation of multimodal intelligence, capable of understanding both text and visual patterns simultaneously. This ability allows them to interpret prompts with remarkable accuracy, producing images that align closely with user intent.

Traditional image generation models often struggled with contextual understanding. For example, complex prompts involving lighting, artistic styles, or scene composition could produce inconsistent results. Gemini models address these limitations by integrating deeper contextual reasoning, enabling more coherent and detailed outputs.

Some of the core strengths of these models include:

- Advanced prompt interpretation – The model understands complex descriptions and translates them into coherent visuals.

- Multimodal capabilities – Text, images, and contextual instructions can be combined within a single request.

- High-quality rendering – Generated images often show improved detail, lighting accuracy, and composition balance.

- Editing flexibility – Instead of regenerating entire images, users can modify specific elements through targeted prompts.

This improved contextual awareness is particularly valuable for professional applications. Designers can experiment with styles, developers can automate creative workflows, and content creators can generate visuals tailored to specific themes or campaigns.

The combination of strong language understanding and visual synthesis makes these models highly adaptable across industries.

Key Features of the Nano Banana API

The Nano Banana API introduces a variety of capabilities that simplify how AI images are generated and edited. Its design focuses on making complex AI functionality accessible through straightforward API calls.

Some of the most notable features include:

1. Text-to-Image Generation

Users can generate original images simply by providing descriptive text prompts. The model analyzes elements such as color, composition, style, and environment to produce a matching visual output.

2. Image Editing and Transformation

Instead of starting from scratch, existing images can be modified through prompt-based instructions. This allows users to change backgrounds, add objects, adjust lighting, or apply stylistic effects.

3. Style Adaptation

AI can recreate images in various artistic styles, ranging from digital painting to photorealistic rendering. This makes the API valuable for design experimentation and creative exploration.

4. Rapid Iteration

Developers can quickly generate multiple variations of the same image concept. This feature is especially useful for marketing teams testing different visual approaches.

5. Automation Support

The API structure allows automated generation pipelines, enabling businesses to produce large volumes of visuals without manual design work.

These features collectively create a flexible framework that allows developers to integrate advanced visual intelligence into modern applications.
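As a rough sketch of the image editing feature, an edit request usually pairs the source image with a text instruction. The payload layout below (`instruction`, `image.mime_type`, `image.data`) is a hypothetical structure for illustration and is not taken from the Nano Banana API documentation.

```python
import base64
import json


def build_edit_payload(
    image_bytes: bytes, instruction: str, mime_type: str = "image/png"
) -> str:
    """Bundle an existing image and an edit instruction into one JSON body."""
    if not image_bytes:
        raise ValueError("image_bytes must not be empty")
    return json.dumps(
        {
            "instruction": instruction,
            "image": {
                "mime_type": mime_type,
                # Binary data is base64-encoded so it can travel inside JSON.
                "data": base64.b64encode(image_bytes).decode("ascii"),
            },
        }
    )


edit_payload = build_edit_payload(
    b"fake-image-bytes", "replace the background with a beach at sunset"
)
```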

How Developers Can Use the API

Integrating an AI image generation API into an application typically follows a structured development process. Even though the technology behind the scenes is complex, the integration itself can be relatively straightforward.

The process generally involves several steps:

- Setting up API authentication – Developers begin by configuring secure access credentials to ensure authorized usage.

- Creating prompt requests – Applications send descriptive prompts that explain the desired image.

- Processing AI responses – The system receives generated images and prepares them for display or further editing.

- Implementing editing workflows – Additional prompts can modify images, enabling iterative design processes.

- Optimizing performance – Developers can adjust prompt structures and generation parameters to improve quality and speed.
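The authentication and request steps might look like the sketch below, which uses only the Python standard library. The endpoint URL, the `IMAGE_API_KEY` environment variable, and the bearer-token scheme are all assumptions made for illustration; the real service may use a different URL, header, or body format.

```python
import json
import os
import urllib.request
from typing import Optional

# Hypothetical endpoint and environment variable name, for illustration only.
API_URL = "https://api.example.com/v1/images/generate"
API_KEY_ENV = "IMAGE_API_KEY"


def build_request(prompt: str, api_key: Optional[str] = None) -> urllib.request.Request:
    """Create an authenticated POST request without sending it."""
    key = api_key or os.environ.get(API_KEY_ENV)
    if not key:
        raise RuntimeError(f"set {API_KEY_ENV} or pass api_key")
    body = json.dumps({"prompt": prompt}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_request("a futuristic city at night", api_key="test-key")
# Sending is left out on purpose. Against a real endpoint it would be:
#   with urllib.request.urlopen(request, timeout=30) as resp:
#       result = json.load(resp)
```

Reading the key from the environment rather than hard-coding it keeps credentials out of source control, which is the usual practice regardless of provider.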

One advantage highlighted by AICC is that developers can experiment rapidly with visual generation features without extensive machine-learning expertise. This lowers the barrier to entry for startups and independent creators who want to incorporate AI visuals into their products.

Practical Applications of AI Image Generation

AI image APIs are not limited to artistic experimentation. They are increasingly used in real-world business and creative workflows where rapid content generation is essential.

Some of the most impactful applications include:

Marketing and Advertising

Marketing teams often require dozens of visual variations for campaigns. AI-generated images can quickly produce promotional graphics, banner designs, and product visuals tailored to different audiences.

Content Creation

Bloggers, educators, and social media creators use AI images to illustrate concepts, enhance storytelling, and produce eye-catching visuals that capture attention.

Product Design

Design teams can prototype visual concepts before committing to full design production. This allows rapid idea testing and early feedback.

Game Development

Developers can generate environment concepts, character art, and creative assets during early development phases.

Educational Visuals

Teachers and educators can generate diagrams, concept illustrations, and learning visuals that support complex explanations.

The ability to create custom images on demand changes how creative content is produced across digital platforms.

Benefits of Using AI APIs for Image Editing

Image editing is traditionally a time-consuming process that requires detailed manual adjustments. AI-powered editing APIs transform this process by allowing users to describe the desired change rather than performing every step manually.

This shift introduces several benefits:

- Speed – Edits that once took hours can be completed in seconds.

- Accessibility – Non-designers can perform complex visual edits using simple text instructions.

- Creative flexibility – Users can experiment with multiple variations quickly.

- Consistency – AI can maintain consistent style and quality across large batches of images.

Another advantage discussed by AICC is the potential for automated content pipelines. Businesses can generate and refine visuals dynamically based on campaign needs, product catalogs, or seasonal promotions.

The result is a creative environment where experimentation becomes faster and less resource-intensive.

Best Practices for Prompting AI Image Models

Creating effective prompts is essential for achieving high-quality AI image outputs. The way a prompt is written directly influences the final visual result.

Here are several best practices for improving prompt quality:

- Be descriptive but clear – Include details about objects, colors, environment, and mood.

- Specify style preferences – Mention artistic styles such as realistic, cinematic, watercolor, or minimalist.

- Use structured descriptions – Break prompts into elements like subject, setting, lighting, and perspective.

- Experiment with variations – Small wording changes can significantly alter results.

- Iterate gradually – Instead of rewriting prompts completely, refine them step by step.

For example, a simple prompt like “a futuristic city” might generate a generic image. Expanding the description to include lighting, architecture style, and atmosphere produces a much more detailed output.

Developers often implement prompt templates in their applications to ensure consistent results for users.
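A prompt template of this kind can be sketched as a small data class that renders the subject, setting, lighting, perspective, and style into one string. The class and function names here (`PromptSpec`, `style_variations`) are hypothetical, and the output format is just one reasonable convention.

```python
from dataclasses import dataclass, replace
from itertools import product
from typing import List


@dataclass(frozen=True)
class PromptSpec:
    subject: str
    setting: str
    lighting: str
    perspective: str
    style: str

    def render(self) -> str:
        """Join the structured fields into a single descriptive prompt."""
        return (
            f"{self.subject}, {self.setting}, {self.lighting} lighting, "
            f"{self.perspective} view, {self.style} style"
        )


def style_variations(
    base: PromptSpec, styles: List[str], lightings: List[str]
) -> List[str]:
    """Render one prompt per (style, lighting) combination."""
    return [
        replace(base, style=s, lighting=l).render()
        for s, l in product(styles, lightings)
    ]


base = PromptSpec(
    subject="a futuristic city",
    setting="towering glass skyscrapers above a rainy street",
    lighting="neon",
    perspective="wide-angle",
    style="cinematic",
)
prompt = base.render()
variants = style_variations(base, ["cinematic", "watercolor"], ["neon", "golden-hour"])
```

Keeping the fields separate makes it easy to change one element at a time, which matches the advice to iterate gradually rather than rewrite the whole prompt.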

The Future of AI Image Generation APIs

AI-driven image generation is still evolving rapidly. Each new model iteration introduces improvements in realism, contextual understanding, and creative flexibility. APIs will likely become even more powerful as models continue to learn from multimodal data sources.

Future advancements may include:

- Real-time image generation within interactive applications

- Improved object consistency across image sequences

- More advanced editing capabilities for precise adjustments

- Enhanced personalization for user-specific visual styles

- Integration with video and 3D generation tools

As these technologies mature, developers will gain access to creative tools that previously required entire production teams. AI APIs will increasingly serve as foundational components in digital design platforms, creative software, and content automation systems.

For developers and creators exploring the future of AI-driven visuals, understanding tools like the Nano Banana API provides valuable insight into how modern image generation workflows are evolving.

Explore more details at https://www.ai.cc/google/.